Build, train, deploy and scale physical AI for the factory

Building AI is hard. Running it in the factory is harder.

AI models are typically developed and validated in controlled environments — simulations, lab setups, or offline pipelines where physics is simplified, sensors are clean, and timing is flexible.

The factory operates under a different reality. Robots must respond in real time. Motion, force, vision, and state are tightly coupled. Contact dynamics evolve continuously. Sensors introduce noise and latency. Safety systems intervene by design.

In this environment, every control cycle must be deterministic (without retries, stalls, or dropped frames) because physical systems do not pause or reset.

Where physical AI breaks down

In production, the challenge is not modelling intelligence. It is executing it under real constraints.

Systems that perform well in controlled environments often struggle on the factory floor because their execution architecture is not designed for real robots:

- Abstracted physics fails in contact‑rich, force‑sensitive tasks

- External compute can introduce latency and synchronization issues

- Perception, planning, and control are decoupled from real‑time motion

- Moving from simulation to deployment requires rebuilding infrastructure

- Reliability, safety, and recovery exist outside the core system

Without an execution layer designed for real‑time, embodied operation, physical AI remains confined to experimentation rather than something that can be reliably deployed, scaled, and trusted in production.

Universal Robots: The execution foundation for physical AI

The platform is designed so that AI operates alongside real‑time control loops, informing and adapting robot behaviour. Execution remains governed by deterministic, safety‑certified control, with direct access to the force, motion, sensing, and timing constraints that define factory environments.

These execution capabilities are exposed to developers through interfaces such as ROS, URScript, PolyScope X, and Direct Torque Control. URCaps extend these capabilities to users and operators at runtime, enabling configuration, orchestration, and interaction within production environments.

Platform capabilities

From development to deployment, the execution layer supports every stage of building, running, and scaling physical AI.

Develop

- PolyScope X: Unified, AI‑ready control environment with open APIs for integrating perception, planning, and learning alongside robot execution.

- ROS 2, Python, C++: Native integration with modern robotics and AI stacks, allowing developers to use familiar tools and workflows without adapting to proprietary systems.

- Simulation assets and calibration access: Factory‑calibrated kinematics, dynamics, and simulation models available for planning, learning, and sim‑to‑real validation.

Execute

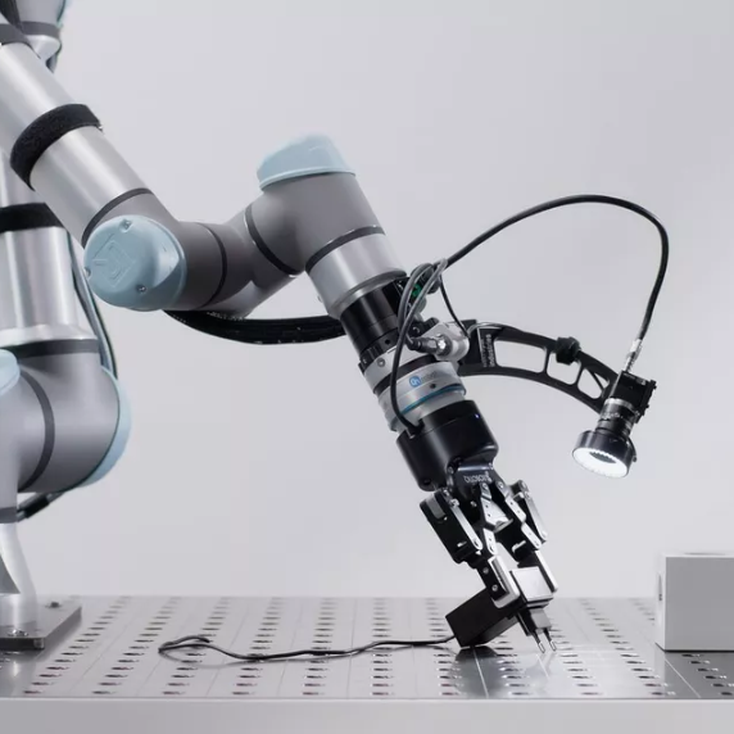

- 500 Hz real‑time control: Deterministic, controller‑executed motion and force loops that ensure predictable timing and repeatable physical behavior.

- Direct torque control: Low‑level access to joint torques and robot dynamics, enabling compliant, contact‑rich, and learning‑based control strategies.

- Force‑torque sensing: Integrated force and torque signals exposed through real‑time interfaces, allowing AI to reason about interaction, not just position.
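To make the 500 Hz figure concrete: it leaves 2 ms per control cycle. The sketch below is a minimal, hardware-free illustration of that budget and of how late cycles could be flagged in a log of cycle start times. The function name `find_overruns` and the 10 % jitter allowance are assumptions made for this example, not part of any UR interface.

```python
from typing import List, Sequence

CONTROL_RATE_HZ = 500.0                 # rate stated in the text
CYCLE_PERIOD_S = 1.0 / CONTROL_RATE_HZ  # 0.002 s, i.e. 2 ms per cycle


def find_overruns(timestamps_s: Sequence[float],
                  period_s: float = CYCLE_PERIOD_S,
                  tolerance: float = 0.10) -> List[int]:
    """Return indices of cycles that started later than the period allows.

    timestamps_s holds the start time of each cycle in seconds. Index i is
    reported when the gap between cycle i-1 and cycle i exceeds the nominal
    period by more than `tolerance` (a hypothetical 10 % jitter allowance).
    """
    limit = period_s * (1.0 + tolerance)
    return [i for i in range(1, len(timestamps_s))
            if timestamps_s[i] - timestamps_s[i - 1] > limit]


# Example: five cycle start times; the fourth starts 3 ms after the third.
stamps = [0.000, 0.002, 0.004, 0.007, 0.009]
overruns = find_overruns(stamps)  # the late cycle is index 3
```

A check like this is only a diagnostic for code running outside the controller; it does not make a loop deterministic.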

Commercialize

- URCaps and Smart Skills: Package AI functionality into deployable, operator‑ready capabilities that integrate directly into UR workflows.

- Partner ecosystem and go‑to‑market: Leverage the UR+ ecosystem, integrators, and solution partners to productize, deploy, and scale AI‑enabled applications.

- Licensing and modular deployment: Control access to advanced capabilities while supporting scalable rollout across robots, cells, and sites.

- Certified deployment and safety: TÜV‑certified safety enforced beneath the AI layer, enabling experimentation, validation, and scale without breaking industrial constraints.

"I was amazed by the speed of the AI Accelerator, and now I regret the time I spent developing some solutions internally."

Minas Liarokapis, CTO, Acumino

A growing product portfolio for AI developers

As physical AI matures, our product portfolio is expanding to support developers across the core stages of execution, data, and deployment.

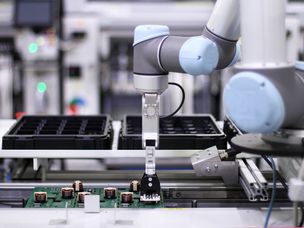

AI Accelerator

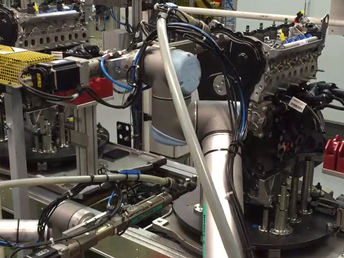

UR's AI Accelerator makes it easier to bring AI into real robotic applications, combining industrial compute, software, and robot access into a single platform.

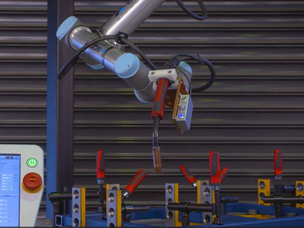

UR AI Trainer

The UR AI Trainer is a training system that helps AI developers and companies prepare models for industrial deployment by capturing high‑quality, real‑world interaction data directly on production‑grade robots.

"UR+ provides a pre‑integrated AI ecosystem that runs directly on the Universal Robots execution layer. Build on components that already work together, instead of assembling infrastructure from scratch."

Anders Billesø Beck, Vice President, AI Robotics Products

Build faster with a ready‑made developer ecosystem

Universal Robots provides a complete developer ecosystem around the execution layer, so you can focus on building AI, not assembling infrastructure.

From open‑source frameworks to pre‑integrated partners and production‑ready tooling, your AI stack is already integrated.

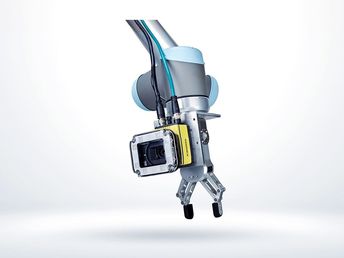

UR+

A curated ecosystem of hardware and software built to run directly on the UR execution layer.

UR+ components are tested, validated, and production‑ready, reducing the need to build and maintain custom drivers, safety logic, and interfaces. For developers, this means faster iteration and a shorter path from prototype to deployment.

ROS community

Native support for ROS 2 with maintained drivers and integration paths that preserve deterministic robot execution.

Develop AI using standard ROS tooling for perception, planning, and learning, while UR handles real‑time motion, force control, and safety on the controller. This separation lets AI and robotics scale together without compromising reliability.
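The separation described above can be pictured as two rates: a slower AI policy that proposes targets, and a fast loop that turns each target into small, bounded per-cycle setpoints. The following is a minimal sketch of that idea only. The 10 Hz policy rate, the step limit, and the function names are assumptions for illustration; this is not how the UR controller or the ROS 2 driver is implemented.

```python
from typing import List

CONTROL_RATE_HZ = 500   # controller rate stated in the text
POLICY_RATE_HZ = 10     # hypothetical rate for a slower AI policy
CYCLES_PER_POLICY_STEP = CONTROL_RATE_HZ // POLICY_RATE_HZ  # 50 cycles


def step_toward(current: float, target: float, max_step: float) -> float:
    """Move `current` toward `target` by at most `max_step` in one cycle."""
    delta = target - current
    if abs(delta) <= max_step:
        return target
    return current + max_step if delta > 0 else current - max_step


def run_policy_step(current: float, target: float,
                    max_step: float) -> List[float]:
    """Produce one setpoint per control cycle until the next policy update."""
    setpoints = []
    for _ in range(CYCLES_PER_POLICY_STEP):
        current = step_toward(current, target, max_step)
        setpoints.append(current)
    return setpoints


# The policy asks for a 0.1 rad move. The fast loop allows 0.001 rad per
# cycle, so only about half of the move is covered before the next update.
trajectory = run_policy_step(current=0.0, target=0.1, max_step=0.001)
```

The point of the design is that a late or erratic policy output changes only the target, never the size of the step taken in any single cycle.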

URSim

URSim is Universal Robots’ offline simulation environment, allowing developers to test, debug, and validate robot programs without access to physical hardware. It mirrors the behaviour of UR controllers, enabling safe iteration and early validation before deployment.

Client libraries

Official, UR‑maintained client libraries provide low‑latency access to robot state, control, and telemetry, and are kept in sync with the latest controller updates.

Through C++ libraries, RTDE, and ROS interfaces, developers can stream real‑time data, run external control loops, and integrate AI systems without reverse‑engineering protocols or building custom communication layers. Because the libraries track controller updates, ROS‑based integrations stay aligned with current controller behaviour.
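As a rough picture of what an external control loop looks like, the sketch below runs a proportional force controller against a simulated contact surface. Everything in it is hypothetical: `RobotLink`, `FakeRobot`, the gain, and the stiffness are invented for this example and are not part of RTDE, the UR client libraries, or any ROS interface. A real integration would implement the two `RobotLink` methods on top of one of those interfaces.

```python
from dataclasses import dataclass
from typing import Protocol


class RobotLink(Protocol):
    """Hypothetical minimal interface an external loop would need."""
    def read_force_z(self) -> float: ...
    def send_velocity_z(self, velocity: float) -> None: ...


@dataclass
class FakeRobot:
    """Stand-in for hardware: a tool above a spring-like surface."""
    z: float = 0.010             # tool height above the surface, metres
    stiffness: float = 10000.0   # N/m, hypothetical surface stiffness
    dt: float = 0.002            # one 500 Hz cycle, seconds

    def read_force_z(self) -> float:
        # Contact force appears only once the tool is below the surface.
        return self.stiffness * max(0.0, -self.z)

    def send_velocity_z(self, velocity: float) -> None:
        # Integrate the commanded velocity over one cycle.
        self.z += velocity * self.dt


def force_control_step(robot: RobotLink, target_force: float,
                       gain: float, max_speed: float) -> float:
    """Run one cycle of proportional force control; return the force error."""
    error = target_force - robot.read_force_z()
    # Clamp the commanded speed, then push down (negative z) when the
    # measured force is below the target.
    speed = max(-max_speed, min(max_speed, gain * error))
    robot.send_velocity_z(-speed)
    return error


robot = FakeRobot()
last_error = 0.0
for _ in range(500):  # 500 cycles, one simulated second at 500 Hz
    last_error = force_control_step(robot, target_force=5.0,
                                    gain=0.01, max_speed=0.05)
final_force = robot.read_force_z()  # settles close to the 5 N target
```

Keeping the loop behind a small interface like this means the same control logic can be exercised against a simulator first and against hardware later.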

Developer tools and docs

A comprehensive set of tools, simulation assets, and documentation supporting development across the full lifecycle.

From simulators and SDKs to examples, reference architectures, and community support, the UR Developer Suite gives teams a consistent foundation for building, testing, and scaling physical AI.

UR Forum

The UR Forum is the official Universal Robots community space where developers, integrators, and partners share knowledge, ask questions, and troubleshoot real‑world automation challenges. It provides direct access to best practices, examples, and guidance from both the community and UR.

Get in touch with Universal Robots

Get in touch to explore tools, execution capabilities, and platforms designed to run AI reliably on real robots in production.