Why direct torque is the missing signal in imitation learning

Imitation learning models trained only on motion and vision break down when they reach the real world. Direct Torque Control exposes force and physical interaction as first‑class signals for a more reliable transition from lab to factory.

As robotics AI moves beyond perception and planning into physical interaction, one limitation keeps surfacing in labs and pilot deployments alike.

Robots trained on motion and vision alone struggle when real contact begins.

Insertion, assembly, polishing, and handovers are not defined by where a robot moves, but by how it interacts with the world. Force, compliance, and physical response determine success, yet most learning pipelines still treat them as secondary effects.

Direct Torque Control exists to change that.

From motion replay to physical interaction

Most industrial robots expose control at the position or velocity level. That abstraction works for deterministic automation, but it hides the robot’s true dynamics from learning systems.

For imitation learning, this becomes a structural limitation.

Human demonstrations encode physical intent:

- How force ramps during insertion

- How resistance is handled under misalignment

- How contact is modulated when tolerances vary

If those signals are invisible to the robot, they are lost to the model.

Direct Torque Control exposes low-level torque control and calibrated robot dynamics, allowing learning systems to reason about force and interaction at the end effector, rather than replaying motion trajectories.
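To make the link between joint torque and end-effector force concrete, here is a minimal sketch of the standard static relation τ = Jᵀ·F for a planar two-link arm. The link lengths, function names, and the two-joint geometry are illustrative assumptions, not part of any Universal Robots interface; a real six-axis arm would use the calibrated Jacobian supplied by the robot.

```python
import math


def planar_jacobian(q1, q2, l1=0.4, l2=0.3):
    """Jacobian of the tip position (x, y) of a planar two-link arm.

    q1, q2 are joint angles in radians; l1, l2 are assumed link lengths in metres.
    """
    s1, c1 = math.sin(q1), math.cos(q1)
    s12, c12 = math.sin(q1 + q2), math.cos(q1 + q2)
    return [
        [-l1 * s1 - l2 * s12, -l2 * s12],
        [l1 * c1 + l2 * c12, l2 * c12],
    ]


def joint_torques_for_tip_force(q1, q2, fx, fy):
    """Map a desired tip force (N) to joint torques (N*m) using tau = J^T F."""
    J = planar_jacobian(q1, q2)
    tau1 = J[0][0] * fx + J[1][0] * fy
    tau2 = J[0][1] * fx + J[1][1] * fy
    return tau1, tau2


if __name__ == "__main__":
    # Elbow bent 90 degrees, pushing with 10 N along +y at the tip.
    print(joint_torques_for_tip_force(0.0, math.pi / 2, 0.0, 10.0))
```

The same relation read in reverse is what lets a learning system interpret measured joint torques as contact forces at the tool, which a position-only interface does not expose.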

Why this matters beyond the lab

Many imitation learning pipelines rely on VR controllers, kinematic teleoperation, or research-only hardware. These approaches often lack force fidelity and do not reflect production conditions.

The result is familiar:

- Clean demonstrations

- Promising models

- Fragile deployment

Direct Torque Control shifts imitation learning onto industrial-grade robots, where demonstrations capture real interaction and physical constraints from the start.

What Direct Torque Control enables for AI model learning

Direct Torque Control is a low-level interface in Universal Robots’ software stack that lets developers command robot torques directly, instead of positions or speeds.

For model learning, this enables three things that higher-level control cannot:

- Force becomes a first-class learning signal. Contact is no longer a side effect of motion. Models can observe, learn from, and act on force, pressure, and compliance during real interaction.

- Learning on real robot dynamics. Developers gain access to calibrated robot dynamics (mass matrices, Jacobians, Coriolis terms, and joint feedback) and can build controllers and learning models on top of real industrial physics, not approximations.

- Continuity from simulation to deployment. Training and execution run on the same control stack and hardware, allowing models developed in simulation or research environments to transfer directly to real robots without rewriting the control layer. Crucially, Direct Torque Control adds compliance and real-time force response, so models do not need to be perfect upfront. When the robot makes contact with the real world, torque sensing and control allow it to adapt dynamically, reducing sim-to-real friction and making deployment more robust in unstructured or contact-rich tasks.

This combination is what allows learning systems to survive contact outside controlled demos.
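As an illustration of what compliance at the torque level can look like, the sketch below implements a simplified per-joint impedance law: a spring-damper pull toward a target posture, plus a gravity term, clamped to a torque limit. Every name, gain, and limit here is a hypothetical choice for illustration; it is not Universal Robots' controller, and a production controller would typically also use the mass matrix and Coriolis terms rather than independent joint gains.

```python
def compliant_torque(q, dq, q_des, stiffness, damping, gravity_torque, tau_limit):
    """Per-joint impedance law with gravity compensation and torque clamping.

    For each joint i:
        tau_i = K_i * (q_des_i - q_i) - D_i * dq_i + g_i
    then tau_i is clamped to [-tau_limit_i, +tau_limit_i].

    All arguments are equal-length sequences. gravity_torque is assumed to be
    supplied by the caller (for example from a dynamics model).
    """
    torques = []
    for qi, dqi, qdi, k, d, g, lim in zip(
        q, dq, q_des, stiffness, damping, gravity_torque, tau_limit
    ):
        raw = k * (qdi - qi) - d * dqi + g
        torques.append(max(-lim, min(lim, raw)))
    return torques


if __name__ == "__main__":
    tau = compliant_torque(
        q=[0.0, 0.1],
        dq=[0.0, 0.5],
        q_des=[0.1, 0.1],
        stiffness=[100.0, 100.0],
        damping=[10.0, 10.0],
        gravity_torque=[2.0, 0.0],
        tau_limit=[5.0, 50.0],
    )
    print(tau)
```

Lower stiffness makes the arm yield more readily on contact, which is one reason an imperfect learned target can still produce a successful insertion: the position error turns into a bounded force instead of a hard collision.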

Direct Torque Control inside UR’s Imitation Learning System

Direct Torque Control is a foundational capability within Universal Robots AI Trainer.

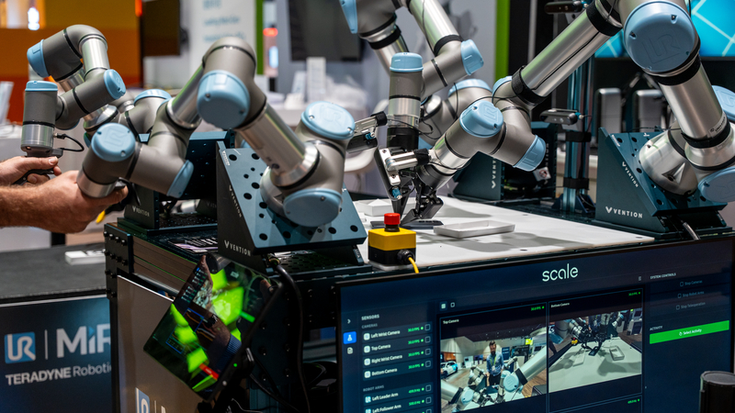

In the leader–follower setup:

- Human operators guide leader robots through real tasks

- Follower robots mirror motion in real time

- Demonstrations capture synchronized motion, force, and vision data

Torque-level access ensures demonstrations include contact, compliance, and physical response, producing datasets suitable for training and fine-tuning modern manipulation and Vision-Language-Action (VLA) models.

Crucially, this happens on the same robots intended for deployment.
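To show what "synchronized motion, force, and vision data" might mean in practice, here is a small sketch that pairs each camera frame with the nearest robot state by timestamp and drops pairs that are too far apart. The record layout, field names, and the 20 ms skew threshold are assumptions made for this example; they do not describe the actual data format of UR's system.

```python
from bisect import bisect_left
from dataclasses import dataclass


@dataclass
class DemoSample:
    """One training sample: robot state and the camera frame closest in time."""
    t: float                # camera timestamp in seconds
    joint_positions: list   # radians
    joint_torques: list     # N*m
    image_id: str           # hypothetical handle to the stored frame


def nearest_index(timestamps, t):
    """Index of the entry in sorted, non-empty `timestamps` closest to t."""
    i = bisect_left(timestamps, t)
    if i == 0:
        return 0
    if i == len(timestamps):
        return len(timestamps) - 1
    before, after = timestamps[i - 1], timestamps[i]
    return i if after - t < t - before else i - 1


def synchronize(robot_log, camera_log, max_skew=0.02):
    """Pair camera frames with robot states.

    robot_log:  list of (t, joint_positions, joint_torques), sorted by t
    camera_log: list of (t, image_id)
    Frames with no robot state within max_skew seconds are skipped.
    """
    if not robot_log:
        return []
    robot_times = [entry[0] for entry in robot_log]
    samples = []
    for t_img, image_id in camera_log:
        t_robot, q, tau = robot_log[nearest_index(robot_times, t_img)]
        if abs(t_robot - t_img) <= max_skew:
            samples.append(DemoSample(t_img, q, tau, image_id))
    return samples
```

Keeping torque in the same record as position and image is the point of the exercise: a model trained on these samples sees how the operator's force evolved alongside what the camera saw.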

Built for Physical AI, not just experimentation

As Physical AI matures, force will not be an edge case. It will be the signal that separates lab-scale experiments from systems that operate reliably on the factory floor.

Direct Torque Control makes that signal accessible today, combining real-time torque feedback and compliance with industrial-grade safety, reliability, and uptime.

For teams building imitation learning pipelines, this is the difference between models that look promising in controlled settings and systems that can be reinforced, deployed, and scaled in production environments.