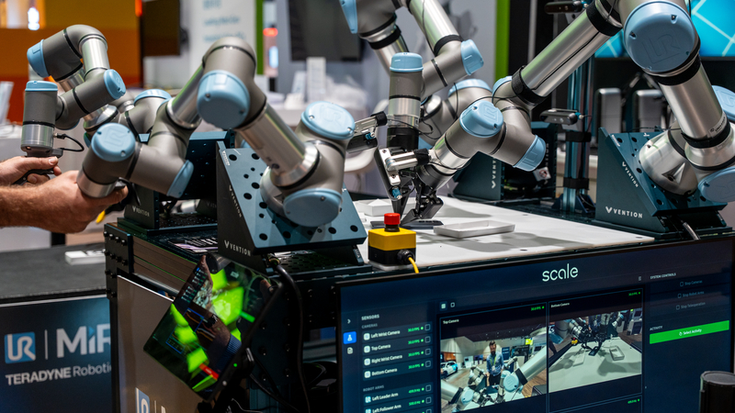

Introducing the new UR AI Trainer

AI training on industrial robots.

Many AI models fail to reach production not because of the algorithm, but because the training setup does not reflect industrial reality.

Training on lightweight research arms or vision‑only systems limits what models can learn about contact, compliance, and interaction. When those models are transferred to industrial robots, performance breaks down.

Universal Robots’ AI Trainer is designed to solve this problem at the source.

Force‑aware learning

Integrated force and torque sensing, combined with Direct Torque Control, allows models to learn what the task feels like, not just how it looks or where the robot moves.

Real industrial dynamics

Train on production‑grade robots with accurate kinematics, calibrated dynamics, and stable control. Models are exposed to the same physics they will encounter in deployment.

Designed for deployment

With over 100,000 robots deployed globally, UR provides an industrial platform that supports long‑running experiments, repeatability, and a realistic path from lab to factory.

“The AI Trainer gives AI teams the confidence to focus on building better models, knowing the data they gather will carry through to real industrial conditions. It also empowers industrial teams to capture the right use-case data and fine-tune models so they’re truly ready for deployment.”

Anders Billesø Beck, VP of AI Robotics Products, Universal Robots

What our system enables

High‑fidelity task data

Capture real task demonstrations that include contact, compliance, and interaction, not just motion paths.

VLA model training

Generate datasets suitable for training and fine‑tuning Vision‑Language‑Action models for physical task execution.

Lab‑to‑factory continuity

Train on the same industrial robots you intend to deploy, reducing sim‑to‑real friction.

How the system works

What’s included in the system

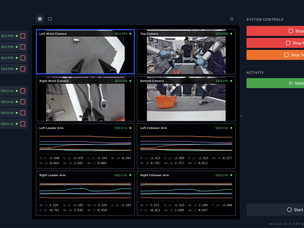

Recording data for model training with Scale AI software

The Scale AI software enables end users to capture high-quality multimodal data for Physical AI development. During each demonstration collected on UR AI Trainer, the Scale software records synchronized motion, force, and visual data, producing the structured datasets required to train Vision-Language-Action (VLA) models.

This allows for scalable data capture across live UR robot deployments and creates continuous feedback that accelerates the development and optimization of physical AI systems.
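To make "synchronized motion, force, and visual data" concrete, here is a minimal Python sketch of one way such streams could be aligned into per-frame records. It is an illustration only, not the Scale AI software's format or API: the `Sample` type, the `synchronize` function, the record field names, and the 20 ms `max_skew` tolerance are all hypothetical.

```python
from bisect import bisect_left
from dataclasses import dataclass


@dataclass
class Sample:
    t: float       # timestamp in seconds
    value: object  # payload: image, joint positions, or force/torque reading


def nearest(stream, t):
    """Return the sample closest in time to t from a non-empty, time-sorted stream."""
    times = [s.t for s in stream]
    i = bisect_left(times, t)
    # Only the neighbours on either side of the insertion point can be closest.
    candidates = stream[max(i - 1, 0): i + 1]
    return min(candidates, key=lambda s: abs(s.t - t))


def synchronize(frames, joints, wrenches, max_skew=0.02):
    """Pair each camera frame with the nearest motion and force samples.

    Frames with no motion or force sample within max_skew seconds are dropped.
    All field names below are hypothetical, chosen for illustration.
    """
    records = []
    for frame in frames:
        q = nearest(joints, frame.t)
        w = nearest(wrenches, frame.t)
        if abs(q.t - frame.t) > max_skew or abs(w.t - frame.t) > max_skew:
            continue
        records.append({
            "t": frame.t,
            "image": frame.value,
            "joint_positions": q.value,
            "wrench": w.value,
        })
    return records
```

Aligning to the camera clock is one possible design choice, since images usually arrive at the lowest rate of the three streams; a real pipeline might instead resample everything onto a fixed time grid.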

Leveraging UR AI Trainer and Scale’s software, Universal Robots and Scale AI will release a large-scale industrial dataset later this year.

Who this is for

- AI and robotics research teams working on manipulation and Physical AI

- Advanced industrial R&D groups

- Technology partners developing AI models

- System integrators working on learning‑based automation

Get started with UR AI Trainer

Explore Universal Robots' AI Trainer on the UR Marketplace, or speak with an expert by filling out the form below.

Complete the form to speak to an expert about your application, data strategy, and setup.

- Universal Robots USA, Inc.

- 27175 Haggerty Road, Suite 160

- Novi, MI 48377